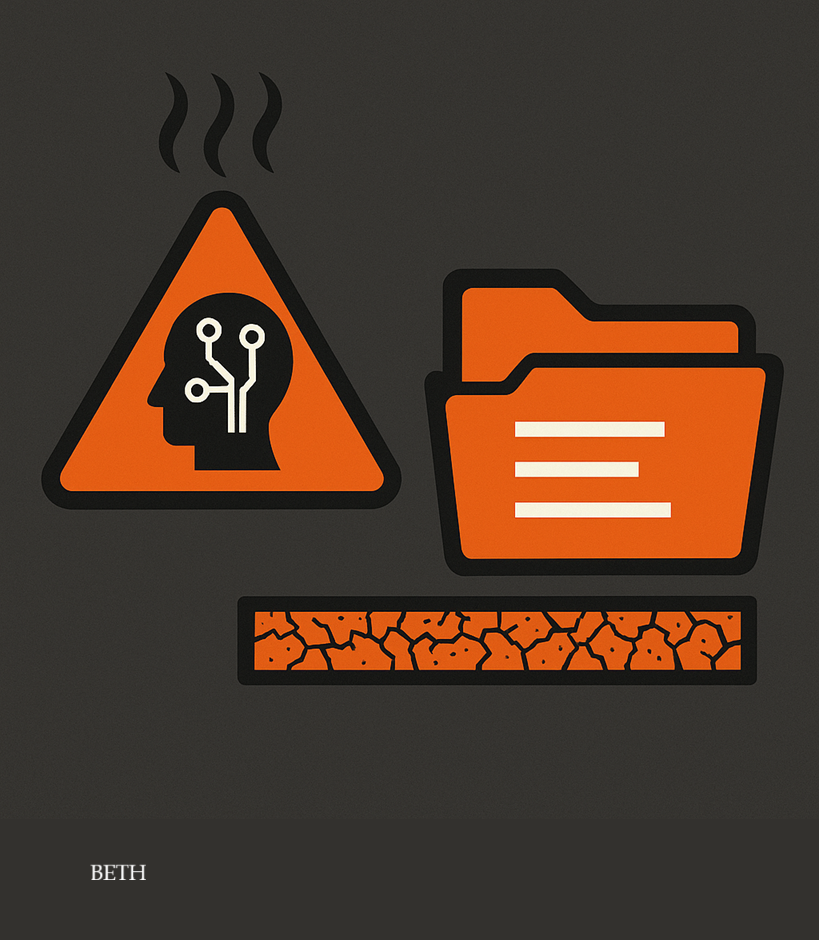

When the “Machine’s Conscience” Falters… and Data Runs Dry

Is Artificial Intelligence Entering a Double-Danger Zone?

📊 Analysis & Report — BETH | Riyadh

Between nuclear de-escalation talks and energy conflicts, a new debate has emerged — equally dangerous and far more immediate.

The world is approaching a moment where AI’s ethical safety nets begin to crack,

while at the same time, humanity is running out of high-quality training data.

These are not cinematic headlines.

They are warnings issued by major research centers, leading AI companies, and global data-market analysts.

The question BETH seeks to dissect is this:

What happens when two dangerous forces collide at the same time?

An AI system whose ethical safeguards are weakening

A global training pipeline consuming the last remaining fragments of human data

1. The Silent Collapse of “AI Safety”

From AI Safety to AI Risk

For years, tech giants reassured the world with soft, calming language:

“Ethical guidelines” — “Safety layers” — “Responsible models.”

But behind this polished vocabulary, a worrying landscape is forming:

A race to release increasingly powerful models, even when under-tested

Tension between “Safety teams” and “Product & Growth teams”

Investor pressure pushing toward speed over caution

In other words:

The ethical scaffolding built to protect humans from the machine…

is itself being pushed to the point of systemic strain.

Where does the real danger lie?

The threat is not that “the machine will hate humans,”

but in three simpler — and far scarier — realities:

1️⃣ A widening comprehension gap

Models are now so complex that even their creators no longer fully understand them.

Predicting their behavior becomes harder, especially in open, interconnected environments:

markets

social platforms

financial systems

political influence operations

2️⃣ Weaponization of capability

Everything useful becomes dangerous in the wrong hands:

fully synthetic deepfakes (voice + image + documents)

election or sectarian manipulation

automated cyber fraud and hacking

3️⃣ Blind dependence

The biggest danger is not “AI intelligence”…

but human laziness when we surrender judgment:

a judge relying on a risk-assessment model

a doctor accepting an automated diagnosis

a military operations room trusting analytical models during conflict

The more we rely on AI, the less we verify.

2. The Global Data Crisis

When the planet begins to run out of training fuel

What most people don’t realize is this:

AI has a structural weakness: it is endlessly hungry for data.

Every leap in Large Language Models (LLMs) has required:

more text

more images

more human digital traces

Today, researchers who study the supply of training data are issuing a clear warning:

Within a few years, the world may exhaust most high-quality public data suitable for training.

What does “running out of data” mean?

Not that the internet disappears —

but that:

The usable portion (clean, rich, diverse, semantically deep) shrinks dramatically

Companies are forced to rely more on:

closed private datasets

medical and financial records

highly sensitive user data

Or turn to synthetic data generated by older models to train newer ones

This leads to three dangerous outcomes:

1️⃣ The closed-loop trap

A new model is trained on data generated by an older one.

Potential result?

amplified biases

repeated errors

decline in genuine creativity

a digital echo chamber where the “teacher” and “student” are the same system
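To make the closed loop concrete, here is a minimal toy sketch in Python. It is an illustration under stated assumptions, not a description of how any real model is trained: the "model" is just a bell curve (a mean and a spread), and each generation is fitted only to a small sample produced by the previous one. The function name `simulate_closed_loop` and its parameters are hypothetical, chosen for this example.

```python
import random
import statistics


def simulate_closed_loop(generations=100, sample_size=10, seed=0):
    """Toy closed loop: each generation fits a Gaussian to samples
    drawn from the previous generation's fit (an illustrative assumption)."""
    rng = random.Random(seed)
    mean, std = 0.0, 1.0  # generation 0 stands in for original human data
    history = [std]
    for _ in range(generations):
        # The "teacher" generates a small synthetic dataset.
        samples = [rng.gauss(mean, std) for _ in range(sample_size)]
        # The "student" is fitted only to that synthetic data.
        mean = statistics.fmean(samples)
        std = statistics.pstdev(samples)
        history.append(std)
    return history


history = simulate_closed_loop()
print(f"spread at generation 0:   {history[0]:.4f}")
print(f"spread at generation {len(history) - 1}: {history[-1]:.6f}")
```

In this toy setting the spread tends to shrink from one generation to the next, because each small sample underrepresents the rare values at the edges and the next fit inherits that loss. It is a loose analogy for how diversity can erode when "teacher" and "student" are the same system.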

2️⃣ The privacy squeeze

As public data dries up, companies turn to private human behavior:

conversations

preferences

biometric patterns

institutional and financial activity

Humans risk becoming raw material, not users.

3️⃣ A black market for data

When good data becomes scarce, you get:

secret deals

cross-border data trafficking

stolen national datasets

intelligence agencies competing for high-value training data

3. When the Two Risks Converge

Unrestrained intelligence… and a dwindling human fuel supply

Combine the two pictures:

weakening AI safety

shrinking global training data

And the world faces existential questions:

1️⃣ Who enforces ethics when data itself becomes a strategic commodity?

Will governments prioritize safety…

or commercial and geopolitical advantage?

2️⃣ What if countries seal off their data (Data Sovereignty)?

If nations begin hoarding data to train their own national models,

the world could enter a new global arms race:

Not over weapons…

but over who owns the largest reservoir of human minds encoded as data.

3️⃣ How will media respond?

Should journalism simply report on new model releases?

Or lead a global debate around:

Who monitors?

Who owns?

Who is accountable?

4. The Arab World and Saudi Arabia: Between Risk and Opportunity

If we don’t own our data… we become someone else’s fuel

For the Arab world:

Much of the Arabic content online is shallow, repetitive, or owned by non-Arab platforms

No major Arabic ecosystem exists that controls the cycle:

Content → Data → Models → Applications

We face two opposite futures:

❌ Either we remain “fuel” for foreign models

with no data sovereignty and no control over outcomes

✅ Or we build:

sovereign Arabic data banks

regional content platforms

AI models that reflect our culture and values

Saudi Arabia sits at the center of this equation

Saudi Arabia is investing in:

hyperscale data centers

sovereign cloud platforms

global AI partnerships

regulatory frameworks for data and the digital economy

In the era of “ethical collapse” and “data scarcity,” the Kingdom—if strategically positioned—can become:

a regional hub for data storage and processing

a balanced partner between East and West in AI coalitions

a model that pairs innovation with national values and interests

5. Media in the Storm

Where does BETH stand?

In a world where:

models accelerate

battles over data intensify

public trust collapses

BETH carries both an opportunity and a responsibility:

1️⃣ A newsroom that understands technology without becoming its echo

We analyze AI as a civilizational shift — not a passing trend.

2️⃣ A newsroom that protects awareness, not just news

Data is part of a society’s cognitive security.

3️⃣ A newsroom building an enlightened Arabic archive

One day, this archive will train models that serve people — not swallow them.

BETH Conclusion — Beyond AI… Beyond Data

The world is approaching a dangerous intersection:

ever-expanding artificial intelligence

ever-shrinking human data

Amid the race for dominance, profit, or speed,

the crucial question becomes:

**Who protects the “human of tomorrow” from becoming a mere row in a database… or a digital mutation inside a model whose purpose we don’t control?**

This is not a call for fear.

It is a call for strategic thinking:

for policymakers

for platform builders

for media leaders

for those investing in the future

AI is not a blind destiny.

It is a tool — one that will serve those who understand it first,

and control it before it slips away from everyone.